When it comes to logging execution information in Integration Services, there are multiple options available. Do you take matters in your own hands and build your own custom logging framework? Or do you let the catalog take care of things?

Can't decide? Check out our guidelines and tips on logging in your SSIS packages and projects.

In this post, two options for logging execution information of an Integration Services package are presented: the Custom Logging framework and the Integration Services Catalog. We'll look how they differ and when we should use which option.

Custom Logging Frameworks

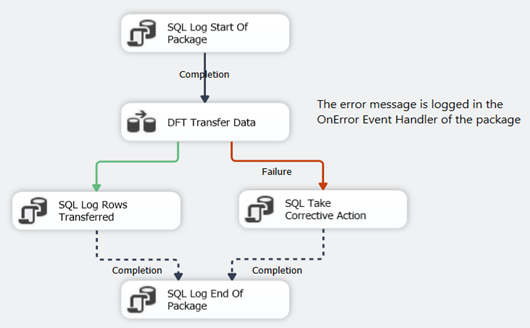

Since the launch of Integration Services (SSIS) with SQL Server 2005, many SSIS developers and their teams have created their own custom “logging framework”, mainly because the built-in logging was not adequate enough at that time. Typically, such a framework logs the start and end time of the package (maybe of the individual tasks as well), the number of rows transferred or updated, the possible error or warning messages and so on. All of this is usually done using Execute SQL Tasks writing data to a table, maybe using event handlers and precedence constraints (the arrows in the control flow).

The advantage of such a framework is control: you decide what is logged and in what format. The downside however is you have to develop the custom framework, maintain it and train people to actually include it in every package. One of the problems with SSIS is the lack of decent templating. If you find a bug in your framework, you can update all of your SSIS packages, one at a time. Another problem is sometimes frameworks start to “drift”. In one project you have a certain version implemented by one developer, but in another project someone else implements a slightly different version of the framework, adjusted to his/her tastes. When a new project is started, which version is the correct one?

The SSIS Catalog

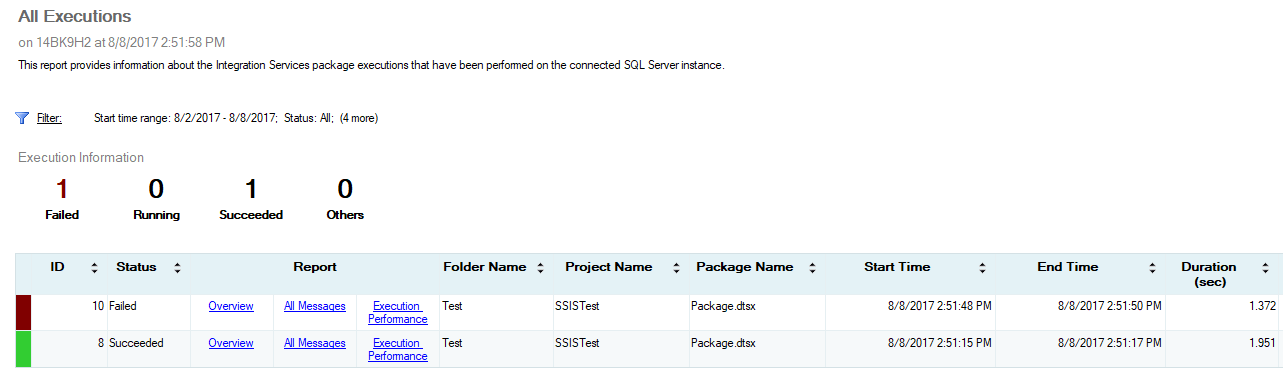

With SQL Server 2012, SSIS got a make-over and the Integration Services catalog was introduced: a centralized repository inside the SQL Server database engine where you can store, execute and monitor your SSIS packages. A nice feature is the built-in logging and reporting; by default a lot of information is logged to the underlying database and there are reports in Management Studio (SSMS) visualizing this data.

The advantages are clear: you don’t have to do a thing; everything is done for you. However, logging too much information can have an impact on the performance of your SSIS package. Furthermore, not everything you might need is logged. A typical example is the number of rows written to a destination. In order to get this number, you have to enable a very detailed level of logging. If you are just interested in row numbers, errors and warnings, you have a massive logging overhead.

What to Use for Logging?

If logging and reporting is already done by the catalog, why would you create a custom logging framework? The short answer: you don’t. You don’t need a complex and a (sometimes) difficult to maintain framework. You let the catalog handle it. The somewhat longer answer: you need to gather all of the logging requirements and check which of the systems can deliver on those requirements. Start simple and expand where needed.

These steps are a useful guideline to configure your logging:

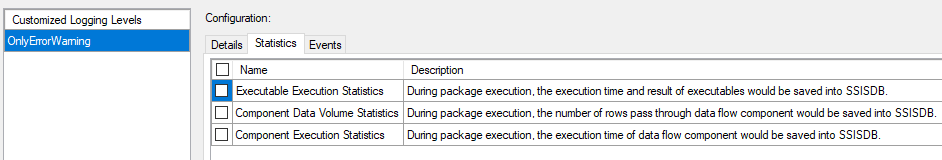

- Basic logging is done in the SSIS catalog. Start and stop time of the packages. Durations of the different tasks. However, don’t use the default logging level (a logging level determines which events are logged to the catalog). This level logs too much information to the catalog, resulting in overhead. Define your own custom logging level instead (provided you are using SQL Server 2016 or later). Start with the OnError and OnWarning events. Make this logging level the default server logging level.

- If you need more events, you can add them to your custom logging level. If you need more performance statistics, you can add those as well.

- If you are happy with the catalog reporting, just use these. If not, you can roll out your own reporting framework using the views provided in the SSISDB database. An example is the SSIS Reporting Pack by Jamie Thomson.

- If you need something that’s not possible with a logging level or it results in too much overhead, implement it with custom logging. A good example are the number of rows transferred in a data flow or the number of lookups failed.

- If you implement custom logging, keep it simple. Don’t try to hide complexity by putting tasks in an event handler. These tasks are hidden from view and make it difficult for new developers to understand your custom logging framework.

- Make the results of the custom logging easily accessible. You can built reports on top of the data and even include them directly in Management Studio. The goal of the logging is people actually taking a look at it.

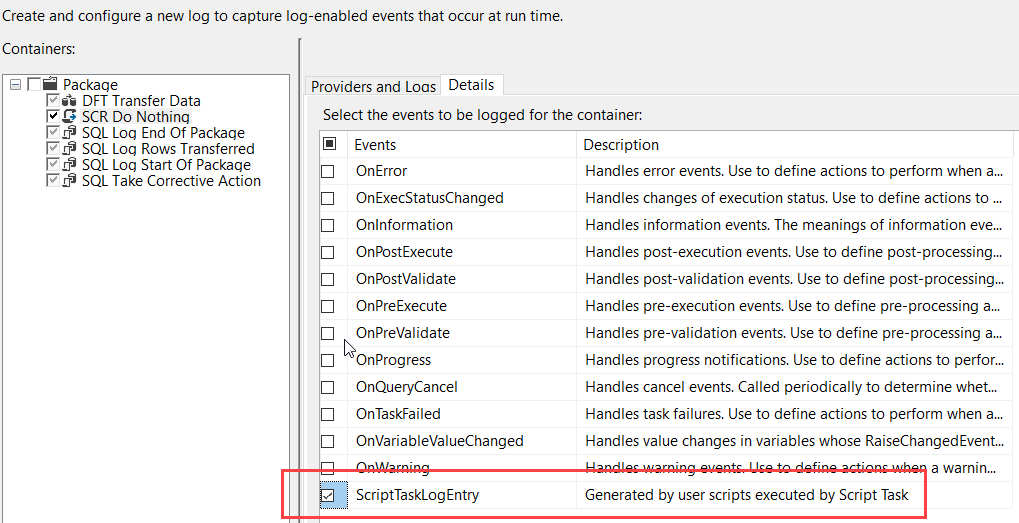

If you’re familiar with SSIS, you might have noticed the package log providers haven’t been mentioned yet. Most of their functionality is now covered by the SSIS catalog. However, there’s one special use case: the logging of the ScriptTaskLogEntry event.

This event is called from inside a script task using the DTS.Log method. It’s currently the only method to log information from within a script task and the only reason to still use the old log providers in a package.

Conclusion

Most logging requirements can easily be handled with the built-in logging provided by the SSIS catalog. Define you own logging level to minimize the overhead induced by the logging. For more specific logging requirements, you might want to expand your logging by adding extra tasks to your packages. You can start from a template to avoid more work, but make sure it has been thoroughly tested. As usual, keep it simple.

If you are interested in the SSIS logging levels, you can find more information in the following articles:

- Integration Services Logging Levels in SQL Server 2016

- Logging Level Recommendations for the SQL Server Integration Services Catalog