What’s one of the most positive things to come out of the COVID-19 lockdown we’ve all found ourselves in across the world? For organizations, it’s how their teams have organized themselves to keep performance up without working side by side every day. Many teams have even managed to become more efficient while facing entirely new challenges, such as helping their kids with schoolwork or simply keeping them busy and entertained.

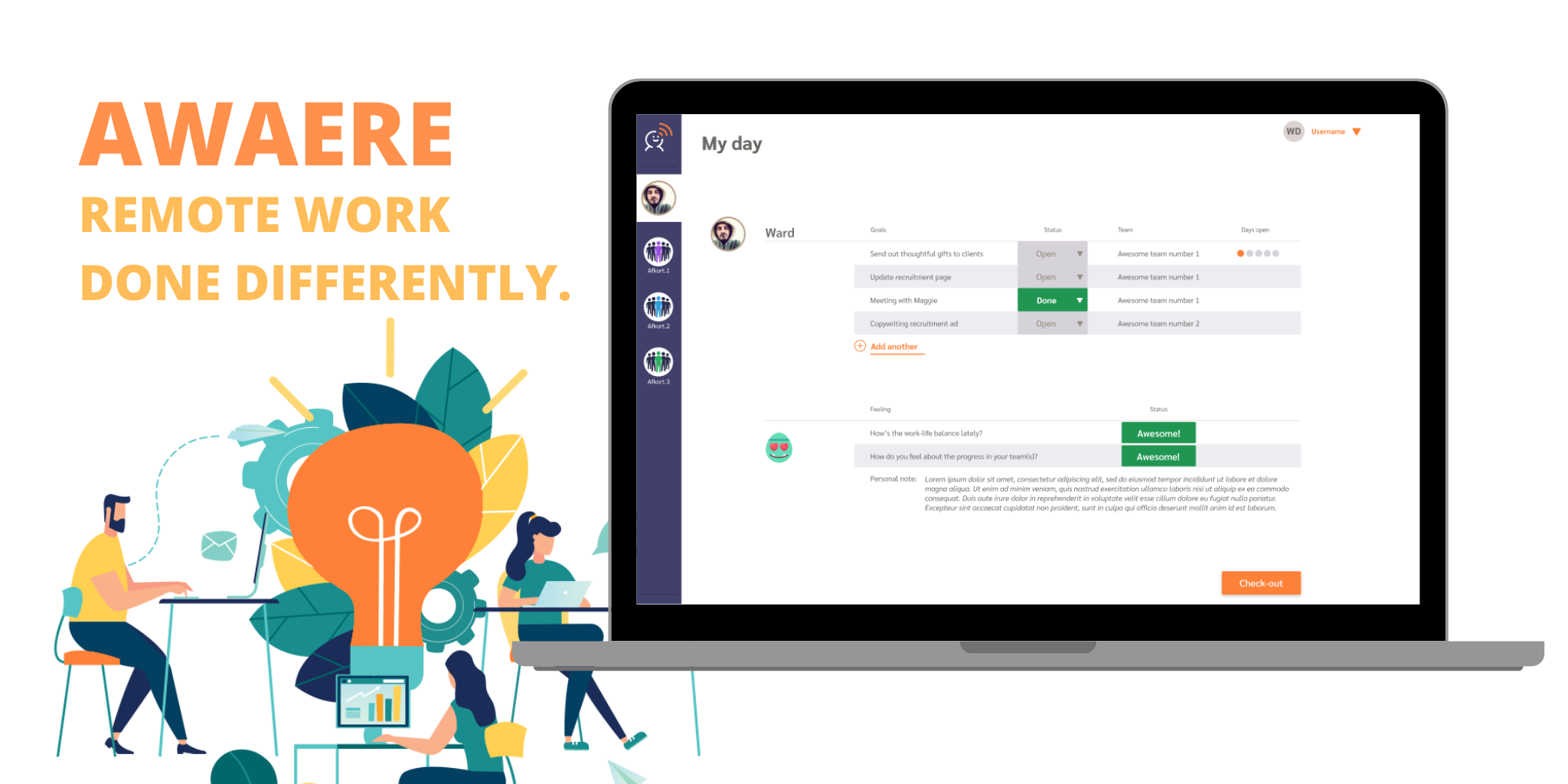

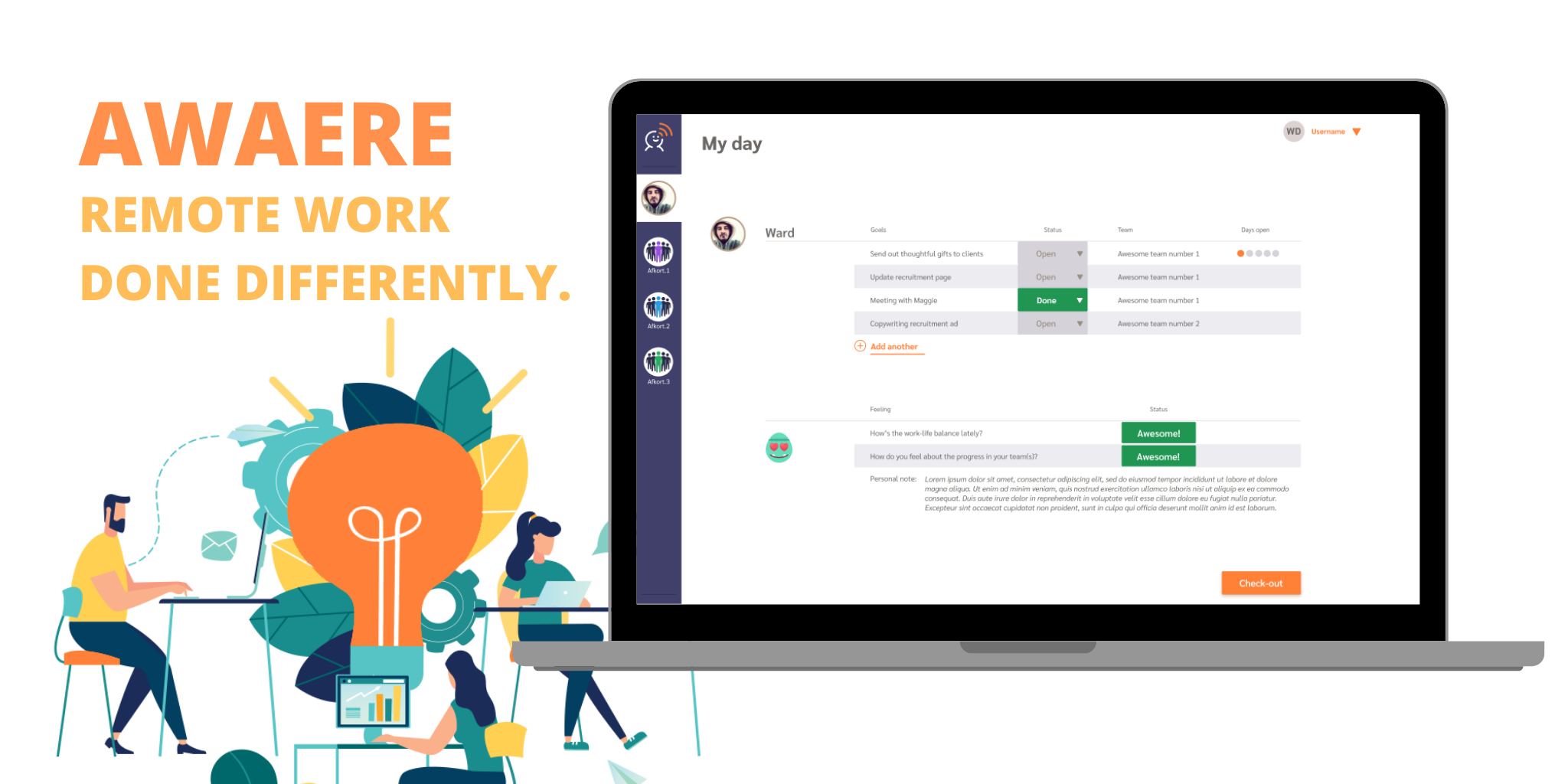

Awaere: Boosting team collaboration and making remote work better together.

By Wim Van Emelen on 19 May 2020

The story behind Awaere: remote work done differently

By Kirsten Vermeulen on 06 May 2020

The COVID-19 crisis has the world working from home, in improvised office spaces. Naturally, this sudden change comes with numerous challenges. Confident that teleworking will continue to play an important role in the post-COVID-19 era, AE has launched Awaere, a digital platform that helps remote teams deal with the challenges of telework in various ways.

We interviewed AE consultants Wim Van Emelen and Joris Hias to learn more about the story behind this innovative platform.

40 days of corona crisis: an enterprise architect's point of view

By Stef Devos on 29 April 2020

Although there are many ways to fill an enterprise architect position in your organization, the role is usually associated with strategic, long-term planning rather than with intervening at an operational level during a crisis. Stef Devos, Director Portfolio Management at AE, explains how Enterprise Architecture and Crisis Management go hand in hand in the current situation.

AE's take on corona? Focus on the now, but keep looking forward together

By Stijn Vander Plaetse on 06 April 2020

At AE, gaining progressive insights has always been a driver for our business. Yet decisions have never been overtaken by events as quickly as they are now.

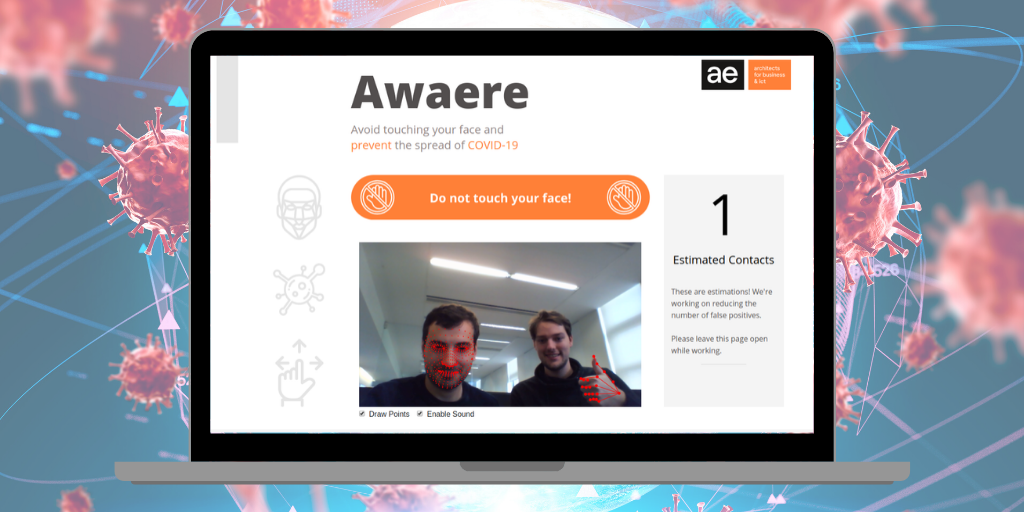

Avoid touching your face while working. Use Face.Awaere.

By Frederik Hautain on 15 March 2020

On Thursday, March 12, the coronavirus crisis exploded in Belgium. That same day, a team of AE innovation experts started to explore how technology could help prevent COVID-19 infections among colleagues.